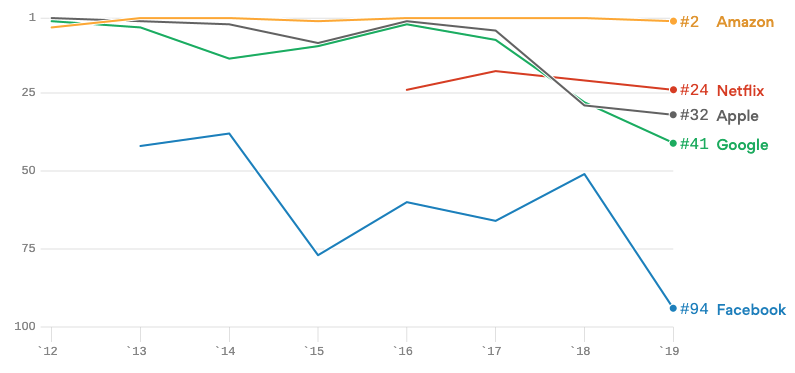

Nowadays, many companies have made privacy one of their top priorities and therefore invest heavily in privacy-related initiatives. They do so not only because regulations such as the General Data Protection Regulation (GDPR) or the California Consumer Privacy Act (CCPA) force them to, but also to prevent data breaches. This is not surprising, as a data breach can have a very significant impact on a company’s reputation. One example is the drop in Facebook’s reputation after the Cambridge Analytica data breach. Since the data breach in early 2018, Facebook has dropped from 50th to 94th place in the reputation ranking of the most visible U.S. companies (Figure 1). As such, companies should probably worry more about the business implications of data breaches than about their legal implications.

But what are data breaches and how can we avoid them?

Data breaches explained

To better understand data breaches and their impact on the reputation of a company, it is worthwhile to take a deeper look at contextual integrity theory. In the academic literature, it is one of the predominant theories used to define and explain data privacy.

Very simply put, contextual integrity theory states that a person feels his or her data has been breached when that data has flowed in a way that he or she feels is inappropriate. This also means that one person might feel that his or her data has been breached while another person, in the same situation, does not.

Contextual integrity makes it clear that, contrary to what the name suggests, data breaches are not only about data security or the exposure of vulnerable data, but also about privacy expectations and preferences. In the light of contextual integrity theory, a key goal of privacy management is to make sure that information flows, or is used, in the way the person expected or preferred.

The consent mechanism and its shortcomings

Consent is probably the best-known mechanism to elicit privacy preferences and to manage privacy expectations. Without delving into legal details, which can differ depending on the country you are living in, consent basically works as follows. Before a person shares personal information with another party such as a company, the company asks the person for permission to use this data for a specified purpose. The person can then decline the request or give his/her consent. This means that the mechanism has two aspects: first, the person is informed about the purposes and second, the person agrees with the purposes or not.

Because the consent mechanism informs the person about the purposes for which his or her data will be used, the person is indeed, in a way, informed about the potential data flows. At first sight, the person’s consent could therefore be interpreted as an indication that the person finds the data flow(s) appropriate.

However, in several recent data breaches, such as the Cambridge Analytica one, consent was asked and given, and still the victims of those breaches had the feeling that their data was used inappropriately. This is an indication that the consent mechanism does not always live up to its purpose. Indeed, researchers have acknowledged that, in the sense of contextual integrity, the consent mechanism has shortcomings that are too big to be overcome.

The following problems are just a few of the known shortcomings of the consent mechanism:

- The information is often hard to cognitively process

Our minds are simply not made to interpret abstract statements such as “your data will be used for marketing purposes”. For example, recently, a telco provider told us that they were analysing whether or not their customers had children and, if they did, they would present targeted tv ads for toys between 4pm and 8pm. Do you think that the customers of this telco thought about this when they agreed to share their data “for marketing purposes”?

- The information is only displayed once

Consent is only asked before data is shared and is typically not limited in time. Hence, people tend to forget that they gave consent in the past. For example, do you know what companies you gave consent to process your data in the year 2010?

- The information is displayed at a moment at which the user is in a weak position

Consent is typically asked before personal data is shared and this personal data is often required to benefit from a free service. At such a moment people tend to suffer from instant gratification bias. That is, we have the tendency to minimise future consequences and “cave in for short-term highs” which we will regret later on.

Fortunately, consent is not the only option that organisations have to manage the privacy expectations and preferences of the people about whom they store personal details.

Complementing consent: continuous privacy feedback

One promising option that is complementary to the consent mechanism is the use of continuous privacy feedback. Simply put, organisations can give such feedback by logging, for each person, each purpose for which their data was used (e.g. the purpose of determining whether the person has children). By doing so, the organisation can present these purposes to the person so s/he can report uses that s/he feels were inappropriate.

This way, people are constantly reminded that they’ve shared their data, get details on what their data was used for and are able to indicate (individually) whether or not they felt their data was used in an appropriate way. This means that the above issues of the consent mechanism are mitigated, that the meaning of data breaches in the sense of contextual integrity theory is taken into account and, therefore, that the risk of privacy breaches is lowered.
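The logging-and-review loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class names `UsageRecord` and `PrivacyFeedbackLog`, the in-memory list used as storage, and the example purposes are all assumptions made for the sketch.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class UsageRecord:
    """One logged use of a person's data for a stated purpose (hypothetical model)."""
    person_id: str
    purpose: str
    used_at: datetime
    # None = not yet reviewed, True = felt appropriate, False = reported as inappropriate
    appropriate: Optional[bool] = None


@dataclass
class PrivacyFeedbackLog:
    """In-memory log of data uses; a real system would persist this somewhere."""
    records: list[UsageRecord] = field(default_factory=list)

    def log_use(self, person_id: str, purpose: str) -> UsageRecord:
        """Record that a person's data was used for a given purpose."""
        record = UsageRecord(person_id, purpose, datetime.now(timezone.utc))
        self.records.append(record)
        return record

    def uses_for(self, person_id: str) -> list[UsageRecord]:
        """Everything that would be shown to one person for review."""
        return [r for r in self.records if r.person_id == person_id]

    def flagged(self) -> list[UsageRecord]:
        """Uses that a person has reported as inappropriate."""
        return [r for r in self.records if r.appropriate is False]


log = PrivacyFeedbackLog()
log.log_use("alice", "determine whether the person has children")
log.log_use("alice", "send monthly invoice")
log.log_use("bob", "determine whether the person has children")

# Alice reviews her own uses and reports everything except invoicing.
for record in log.uses_for("alice"):
    record.appropriate = record.purpose == "send monthly invoice"
```

Note that the feedback is stored per person and per use: Bob's identical use stays unreviewed, which matches the idea that appropriateness is an individual judgement.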

Positive side effects of continuous privacy feedback

Organisations that implement continuous privacy feedback will not only lower their data privacy risk, but can also encounter other positive side effects, mainly because they keep a log of the purposes for which personal data was used.

Many positive side effects of keeping a log of data usage have to do with better data quality management and data governance. Data quality management would, for example, improve because when data uses are logged and thus known, the impact of errors can be better estimated. Data governance would benefit from logging because when an inappropriate data use occurs, the right person can be held accountable.
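As a rough sketch of both side effects, suppose each log entry also records which piece of data was used and who is responsible for that use. The record layout (`DataUse`), the field names, and the role names below are hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from typing import NamedTuple


class DataUse(NamedTuple):
    person_id: str
    data_field: str   # which piece of personal data was used
    purpose: str
    responsible: str  # who answers for this use (hypothetical role name)


usage_log = [
    DataUse("alice", "household_composition", "targeted TV ads", "marketing-lead"),
    DataUse("bob", "household_composition", "targeted TV ads", "marketing-lead"),
    DataUse("alice", "postal_address", "invoice delivery", "billing-lead"),
]


def impact_of_error(log, data_field):
    """Data quality: map each purpose that used `data_field` to the affected people."""
    affected = defaultdict(set)
    for use in log:
        if use.data_field == data_field:
            affected[use.purpose].add(use.person_id)
    return dict(affected)


def accountable_for(log, person_id, purpose):
    """Data governance: list who is responsible for a reported use."""
    return sorted({u.responsible for u in log
                   if u.person_id == person_id and u.purpose == purpose})
```

If the household data turned out to be wrong, `impact_of_error(usage_log, "household_composition")` would show which purposes and which people were touched by the error, and `accountable_for(usage_log, "alice", "invoice delivery")` would point to the owner of a use that Alice reported.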

Feasibility: Challenge and Opportunity

However, for organisations, logging data uses and sharing those data uses with their customers or employees is not simple.

The challenges that organisations face are twofold. First, from an internal point of view, companies typically store personal data in multiple disparate legacy systems which are not easy to interconnect. Second, from a societal point of view, each company would display such information on the person’s profile page on its own website. The lack of a single view would make things very difficult for a person who is connected with multiple companies. That is, the person has to remember with which organisations s/he is connected and has to look at all of his/her profile pages in isolation.

Be that as it may, organisations that aspire to be digital leaders should consider those challenges as an opportunity to gain more trust than their competitors and effectively create a long-lasting competitive advantage. This is because, although technological competitive advantages are almost always temporary, they last longer when competitors have a difficult time implementing the same technology.

For the organisations that want to tackle the above challenges, a personal data web based on Tim Berners-Lee’s Solid specification would provide a great opportunity. This is because such a web can both interconnect legacy applications or databases internally and share personal data with the outside world in a standardised form.

Conclusion

As consent is not the holy grail of privacy management, companies should explore other complementary techniques for being more transparent towards their customers or employees. One promising option, which is both theoretically sound and complementary to the consent mechanism, is to log, for each user, each purpose for which his/her data was used and then let these users review the appropriateness of these purposes. By adopting this method, digital leaders can create long-lasting competitive advantages not only by lowering their risk of data breaches, but also by creating other positive side effects such as better data quality management and better data governance.

This article is also published on Medium.com.